Eazfuscator.NET is a production tool. This means a lot of people rely on it every day. That fact puts a great responsibility on us.

Eazfuscator.NET has hundreds of automated tests. Nearly everything is tested: the impact of obfuscation on serialization, reflection, code semantics, the user interface, and so on.

User interface (UI) testing turned out to be a twilight zone of computer science. That's why we researched and developed a UI testing technology based on artificial intelligence (AI). And I think it will amaze you.

Quality Assurance and User Interface

Nearly every programmer has heard about quality assurance (QA), tests and test-driven development (TDD).

Those are great concepts and methodologies backed by a strong set of tools. As a result, now we have better software than we had 10 years ago.

A lot of manual QA checks in software can be automated. Coded a Levenshtein Distance algorithm? Great! Now you can introduce a small unit test that ensures the correctness of implementation.
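As a rough illustration of that kind of check, here is a minimal sketch of a Levenshtein distance function with a small unit test next to it. The function and test names are my own for this example, and the test is written with plain assertions so it can be dropped into any xUnit-style runner.

```python
def levenshtein(a: str, b: str) -> int:
    """Return the edit distance between a and b (insert, delete, substitute)."""
    # previous[j] holds the distance between the processed prefix of a and b[:j].
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(
                previous[j] + 1,         # delete ca
                current[j - 1] + 1,      # insert cb
                previous[j - 1] + cost,  # substitute or match
            ))
        previous = current
    return previous[-1]


def test_levenshtein() -> None:
    assert levenshtein("", "") == 0
    assert levenshtein("kitten", "sitting") == 3
    assert levenshtein("flaw", "lawn") == 2
    assert levenshtein("abc", "") == 3


test_levenshtein()
```

The test is tiny, but it pins down the behavior on an empty input, a classic textbook pair and an asymmetric case, which is usually enough to catch an off-by-one error in the table.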

However, there is a missing piece in the puzzle: the testing of Graphical User Interface (GUI) programs.

Sure, there are some solutions available. Selenium is a popular automation kit for HTML. It uses the HTML DOM to expose the UI layout tree to a test.

Windows has the Automation API, which does nearly the same thing as Selenium does in the HTML world.

Both the Automation API and Selenium represent a model-based approach to UI testing. They operate on document object models (sometimes called UI layout trees) where every UI element, such as a button, label, drop-down or edit field, has an assigned identifier. Identifiers in the test and in the application are kept in sync, so that the test can reach any control in the application's UI.
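To make the model-based approach concrete, here is a small sketch of a layout tree and a lookup by identifier. It is not Selenium or the Automation API: `UiElement`, `find_by_id` and the identifiers are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class UiElement:
    """One node of a hypothetical UI layout tree."""
    element_id: str
    kind: str
    children: List["UiElement"] = field(default_factory=list)
    clicked: bool = False

    def click(self) -> None:
        self.clicked = True


def find_by_id(root: UiElement, element_id: str) -> Optional[UiElement]:
    """Depth-first search for the element with the given identifier."""
    if root.element_id == element_id:
        return root
    for child in root.children:
        found = find_by_id(child, element_id)
        if found is not None:
            return found
    return None


# The application exposes its controls as a tree of identified elements.
window = UiElement("mainWindow", "window", [
    UiElement("nameEdit", "edit"),
    UiElement("toolbar", "panel", [UiElement("okButton", "button")]),
])

# The test knows the same identifier and uses it to reach the control.
ok = find_by_id(window, "okButton")
assert ok is not None
ok.click()
```

The whole scheme rests on the identifier being present in the tree. Anything that is not a node with an identifier is simply out of the test's reach.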

But there is a catch:

UI Automation Based on Layout Trees Won't Work for You

Actually, it will work for simple HTML pages and simple Windows applications. But as your application grows, you will start to run into trouble.

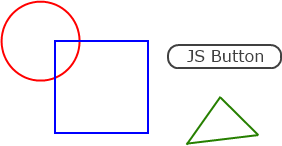

HTML canvas is a great example of a twilight zone for UI automation. Right, the canvas element has an assigned id, and the test can reach the canvas element by that known identifier. But the test can't see the content of a canvas. The content is not marked with identifiers; it is just a bunch of drawn rectangles, ellipses and pixels. The test is blind here. It cannot press that JS Button element.

Yes, you may try to use X and Y offsets to take some blind shots there, but let's face it: it doesn't work reliably enough to be useful unless your system has pixel-perfect rendering and real-time responsiveness. This is not the case for current PCs, browsers and operating systems.
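Here is a small sketch of why fixed offsets are fragile. It assumes a custom-drawn button whose position was recorded at 100% display scaling and then checks the same hard-coded click point at 150%. The `Rect` type and all the coordinates are made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    left: int
    top: int
    width: int
    height: int

    def contains(self, x: int, y: int) -> bool:
        return (self.left <= x < self.left + self.width
                and self.top <= y < self.top + self.height)

    def scaled(self, factor: float) -> "Rect":
        """The same rectangle as rendered at a different scale factor."""
        return Rect(round(self.left * factor), round(self.top * factor),
                    round(self.width * factor), round(self.height * factor))


# Where the custom-drawn button sits on the machine the test was recorded on.
button_at_100_percent = Rect(left=200, top=120, width=80, height=24)

# Hard-coded offsets: the centre of the button at 100% scaling.
CLICK_X, CLICK_Y = 240, 132

hits_at_100 = button_at_100_percent.contains(CLICK_X, CLICK_Y)
hits_at_150 = button_at_100_percent.scaled(1.5).contains(CLICK_X, CLICK_Y)

print(hits_at_100, hits_at_150)
```

At 150% the button starts at x = 300, so the recorded point at x = 240 lands on empty space. A font change or a slightly different layout pass can have the same effect on a smaller scale.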

Canvas is still a rare element in HTML pages nowadays. So the issue is pretty minor for HTML. But it is totally different for desktop applications.

Every decent desktop application contains tons of custom-drawn UI. And it has no Automation API coverage, of course. That makes its UI nearly untestable.

I am going to ask a simple question: do you test the UI of your desktop application in any way other than manually? For most people nowadays, the answer is obviously no.

AI Algorithms Allow Swapping a Human UI Tester for a Robot

Just imagine how cool it would be to swap yourself for a robot. Not only would it considerably reduce the amount of monotonous work, but it would also greatly improve the cost, speed and quality of testing.

That's not only a dream. We made it a somewhat working reality in our environment.

Well, most of the time (more on that later).

A Starting Point

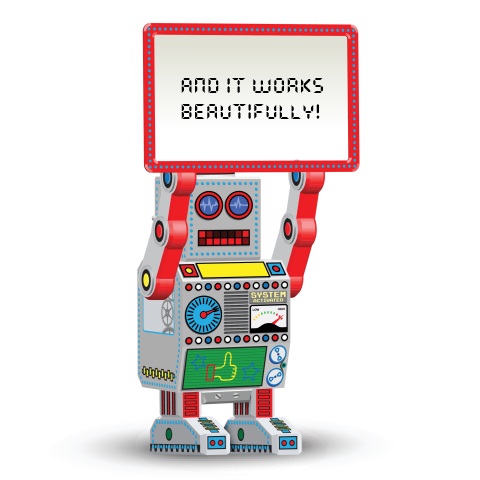

We searched the web for something similar and found Sikuli Script. It gave us a bright idea of how it could be done:

Looks excellent, doesn't it? We tried to use Sikuli Script for our purposes but quickly came to the conclusion that it is more of an automation toy than a professional testing tool.

What we wanted was a small UI testing framework that could be easily integrated into existing xUnit tests. Another requirement was the ability to test applications that run on devices and simulators, a kind of remote testing. Simplicity of deployment was important too, because we saw a classic example of deployment hell in Sikuli Script: Java + ... + OpenCV of specific versions, all wrapped in a weak installer that barely worked.

Our Design

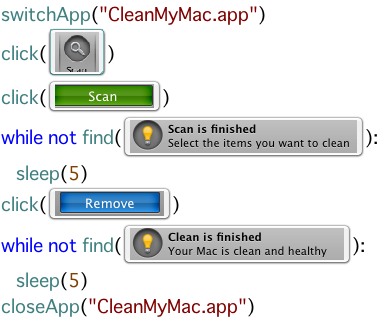

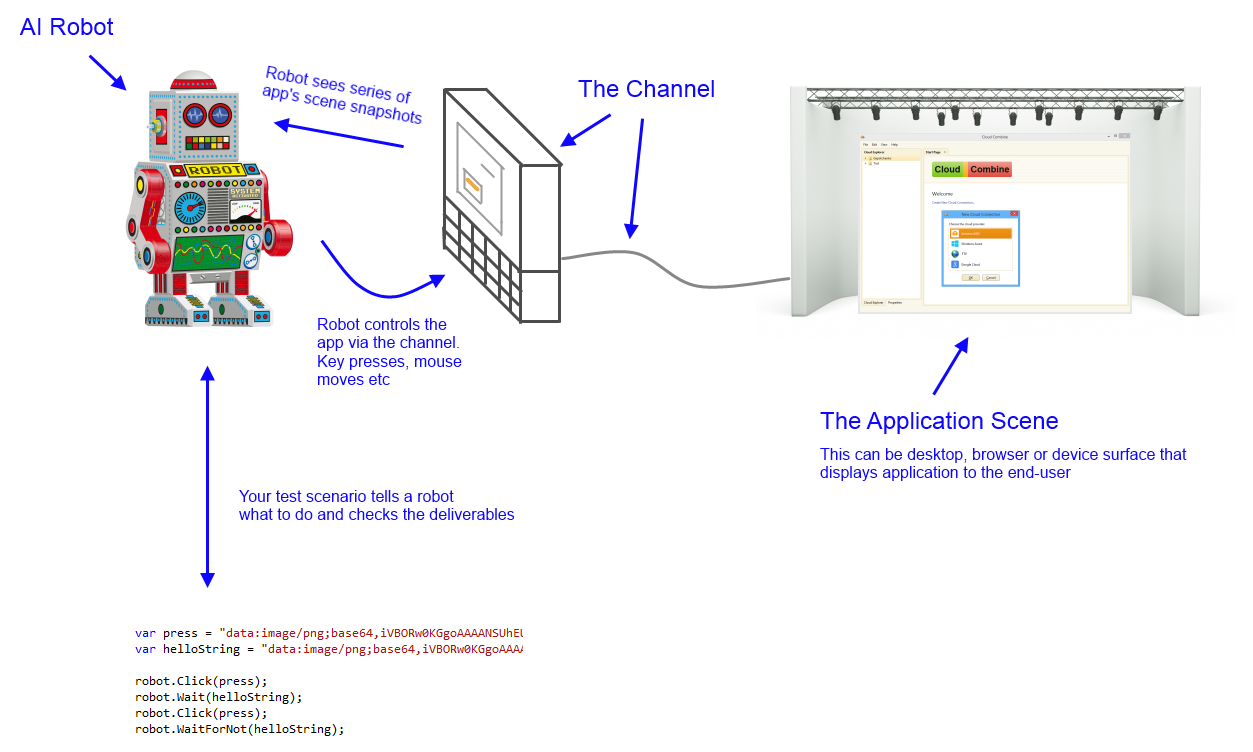

Here is a quick schematic of what's going on in our AI UI testing framework:

You can see at least two important abstractions here: the AI robot and the channel. They maximize the flexibility of the testing framework: you can attach or intercept everything you need right from the code of a test. For example, you can provide your own channel implementation and feed the robot with your own images.

Below is a video of an AI testing session. Note that it's not me clicking the screens, it's a robot. I'm just starting the tests in the xUnit shell:
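The split between a robot and a channel can be sketched roughly as follows. This is not the framework's actual API: `Channel`, `Robot`, `FakeChannel`, `find_exact` and `click_on` are hypothetical names, images are plain lists of grayscale values, and the matching is a deliberately naive exact comparison. The point is only that the robot never touches the screen directly, so a test can substitute its own channel and its own images.

```python
from typing import List, Optional, Protocol, Tuple

Image = List[List[int]]  # rows of grayscale pixel values


class Channel(Protocol):
    """Hypothetical link between the robot and the system under test."""
    def capture(self) -> Image: ...
    def click(self, x: int, y: int) -> None: ...


def find_exact(screen: Image, template: Image) -> Optional[Tuple[int, int]]:
    """Return the top-left (x, y) of the first exact match, or None."""
    th, tw = len(template), len(template[0])
    for y in range(len(screen) - th + 1):
        for x in range(len(screen[0]) - tw + 1):
            if all(screen[y + r][x:x + tw] == template[r] for r in range(th)):
                return x, y
    return None


class Robot:
    """Looks at the screen through a channel and acts on what it sees."""
    def __init__(self, channel: Channel) -> None:
        self.channel = channel

    def click_on(self, template: Image) -> bool:
        position = find_exact(self.channel.capture(), template)
        if position is None:
            return False
        x, y = position
        # Click the centre of the matched region.
        self.channel.click(x + len(template[0]) // 2, y + len(template) // 2)
        return True


class FakeChannel:
    """A custom channel that feeds the robot a prepared image."""
    def __init__(self, screen: Image) -> None:
        self.screen = screen
        self.clicks: List[Tuple[int, int]] = []

    def capture(self) -> Image:
        return self.screen

    def click(self, x: int, y: int) -> None:
        self.clicks.append((x, y))


screen = [
    [0, 0, 0, 0, 0],
    [0, 9, 8, 0, 0],
    [0, 7, 6, 0, 0],
    [0, 0, 0, 0, 0],
]
button = [[9, 8], [7, 6]]

channel = FakeChannel(screen)
robot = Robot(channel)
clicked = robot.click_on(button)
```

In a sketch like this, a channel for a local desktop, a remote device or a simulator would only need to implement the same two methods, which is one plausible way to get the remote testing mentioned earlier.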

Delays at the start of every test are caused by project rebuilds. They are performed in order to ensure that we run fresh test assets. This is just a small requirement for Eazfuscator.NET tests. Your tests are unlikely to have it.

Challenges

There may be theme, scaling and font variations between different operating systems. The current AI implementation assumes that the host system has a nearly default theme, DPI and fonts.

We could go further here and implement a more sophisticated pattern matching algorithm. That's probably work for the future if we decide to roll out the project as a fully featured product.
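To show what is at stake, here is a sketch that compares exact matching with a tolerance-based match on a control whose pixels differ slightly from the reference image. This is an assumption about one possible direction, not the algorithm used in our framework; `mean_abs_diff`, `find_best` and the pixel values are invented for the example.

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # rows of grayscale pixel values, 0..255


def mean_abs_diff(screen: Image, template: Image, x: int, y: int) -> float:
    """Average per-pixel difference between template and screen at (x, y)."""
    total = 0
    for r, row in enumerate(template):
        for c, value in enumerate(row):
            total += abs(screen[y + r][x + c] - value)
    return total / (len(template) * len(template[0]))


def find_best(screen: Image, template: Image,
              tolerance: float) -> Optional[Tuple[int, int]]:
    """Return the best-matching position if its difference is within tolerance."""
    th, tw = len(template), len(template[0])
    best: Optional[Tuple[int, int]] = None
    best_score = float("inf")
    for y in range(len(screen) - th + 1):
        for x in range(len(screen[0]) - tw + 1):
            score = mean_abs_diff(screen, template, x, y)
            if score < best_score:
                best, best_score = (x, y), score
    return best if best_score <= tolerance else None


# Reference image of a control, captured on the original system.
template = [[200, 200], [50, 50]]

# The same control rendered with slightly different anti-aliasing.
screen = [
    [0,   0,   0, 0],
    [0, 196, 203, 0],
    [0,  54,  47, 0],
]

exact = find_best(screen, template, tolerance=0.0)  # demands identical pixels
fuzzy = find_best(screen, template, tolerance=8.0)  # allows small deviations
```

With zero tolerance the control is not found at all, while a small tolerance locates it at (1, 1). A tolerance alone would not cope with a different theme or DPI, which is why a more robust matcher would be real work.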

UPDATE #1 on August 2, 2015: When we tried to run the tests on Windows 10, 20% of them failed due to subtle differences in font and window chrome rendering. This proves one more time that naïve AI algorithms don't work in the long run. It also pushes me to try to provide an industrial solution to this world-class problem, which remains nearly unsolved nowadays.